Imagine trying to verify a library's contents without reading every single book. That is exactly the problem Data Availability Layers are designed to solve. In the early days of blockchain, networks like Bitcoin and Ethereum handled everything themselves: execution, consensus, and storage. This monolithic approach worked fine when transaction volumes were low. But as demand skyrocketed, these networks hit a wall: they became slow, expensive, and prone to congestion. The solution wasn't simply to make blocks bigger; it was to separate concerns. Enter the modular blockchain architecture, where different tasks are handed off to specialized layers. Among these, the Data Availability Layer (DAL) has emerged as the critical backbone for scaling.

A data availability layer is a dedicated subsystem responsible for storing transaction data and ensuring that this data is publicly accessible for verification. In traditional blockchains, full nodes must download and process every transaction to ensure security; a DAL instead allows light clients to verify that data was published without downloading the entire dataset. This separation lets execution layers, such as rollups, process transactions at high speed while relying on the DAL for secure, verifiable data publication. The concept gained traction in the early 2020s, as Ethereum adopted a rollup-centric roadmap and Celestia (originally called LazyLedger) built the first dedicated DAL.
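To make this division of labor concrete, here is a minimal sketch under loudly stated assumptions: `ToyDALayer`, `ToyRollup`, and `replay` are hypothetical names, everything lives in memory, and a plain SHA-256 hash stands in for the erasure-coded commitments a real DAL would use. The rollup executes transactions and publishes each batch; any third party can then rebuild the rollup's state from the published data alone.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ToyDALayer:
    """Hypothetical DA layer: stores published blobs keyed by a hash commitment."""
    blobs: dict[str, bytes] = field(default_factory=dict)

    def submit(self, data: bytes) -> str:
        # A plain SHA-256 digest stands in for the Merkle or KZG commitment
        # a real DAL would compute over erasure-coded data.
        commitment = hashlib.sha256(data).hexdigest()
        self.blobs[commitment] = data
        return commitment

    def retrieve(self, commitment: str) -> Optional[bytes]:
        return self.blobs.get(commitment)


@dataclass
class ToyRollup:
    """Hypothetical execution layer: runs transfers, then publishes each batch."""
    da: ToyDALayer
    balances: dict[str, int] = field(default_factory=dict)
    posted: list[str] = field(default_factory=list)

    def execute_batch(self, txs: list[tuple[str, str, int]]) -> str:
        for sender, receiver, amount in txs:
            if self.balances.get(sender, 0) < amount:
                raise ValueError(f"insufficient balance for {sender}")
            self.balances[sender] -= amount
            self.balances[receiver] = self.balances.get(receiver, 0) + amount
        # Execution happens here; only the serialized batch goes to the DA layer.
        payload = "\n".join(f"{s},{r},{a}" for s, r, a in txs).encode()
        commitment = self.da.submit(payload)
        self.posted.append(commitment)
        return commitment


def replay(da: ToyDALayer, commitments: list[str], genesis: dict[str, int]) -> dict[str, int]:
    """Rebuild rollup state using nothing but data published to the DA layer."""
    state = dict(genesis)
    for c in commitments:
        data = da.retrieve(c)
        if data is None or hashlib.sha256(data).hexdigest() != c:
            raise RuntimeError("batch data unavailable; state cannot be verified")
        for line in data.decode().splitlines():
            sender, receiver, amount = line.split(",")
            state[sender] = state.get(sender, 0) - int(amount)
            state[receiver] = state.get(receiver, 0) + int(amount)
    return state


# Usage: a verifier that never saw the rollup's execution reaches the same state.
genesis = {"alice": 10}
da = ToyDALayer()
rollup = ToyRollup(da, dict(genesis))
rollup.execute_batch([("alice", "bob", 3), ("bob", "carol", 1)])
assert replay(da, rollup.posted, genesis) == rollup.balances
```

If the DA layer withholds a batch, `replay` fails: that failure is exactly the situation a real DAL is built to rule out.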

The Core Problem: Why We Need Dedicated Data Layers

To understand why DALs matter, you have to look at the "data availability problem." In a trustless system, anyone should be able to join the network and verify its state. Historically, this meant running a full node, which required downloading every block ever created. As block sizes grew, this became impractical for most users. And if a block producer publishes a block header but withholds the underlying data, nobody can check whether that block contains invalid transactions, so malicious actors could slip fraudulent transactions through or censor activity, undermining the network's integrity.

In monolithic blockchains, increasing block size to handle more transactions forces every node to store and transmit more data. This creates a bottleneck: you can either scale throughput and sacrifice decentralization (by requiring powerful hardware) or keep decentralization and accept low throughput. DALs are designed to break this trade-off. By offloading data publication to a specialized layer that light nodes can verify through sampling, execution environments can scale independently. For example, Ethereum's base layer handles roughly 15-45 transactions per second because of its gas limit and storage requirements, while dedicated DALs claim theoretical throughputs of over 100,000 transactions per second for the rollups built on them.
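The storage side of this trade-off is easy to quantify. The back-of-the-envelope sketch below assumes every block is full and uses a hypothetical 12-second block time; the block sizes are illustrative, not any specific chain's parameters.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def annual_storage_bytes(block_size_bytes: int, block_time_s: float) -> float:
    """Bytes a full node must add per year if every block is full."""
    blocks_per_year = SECONDS_PER_YEAR / block_time_s
    return block_size_bytes * blocks_per_year


# Hypothetical parameters: 12-second blocks at three block sizes.
for size_mb in (1, 10, 100):
    tb = annual_storage_bytes(size_mb * 1_000_000, 12) / 1e12
    print(f"{size_mb:>4} MB blocks -> {tb:,.1f} TB per year")
```

Under these assumptions, 1 MB blocks add about 2.6 TB per year and 100 MB blocks about 263 TB, which is why simply enlarging blocks pushes ordinary users out of running full nodes.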

How Data Availability Layers Work: The Technical Mechanics

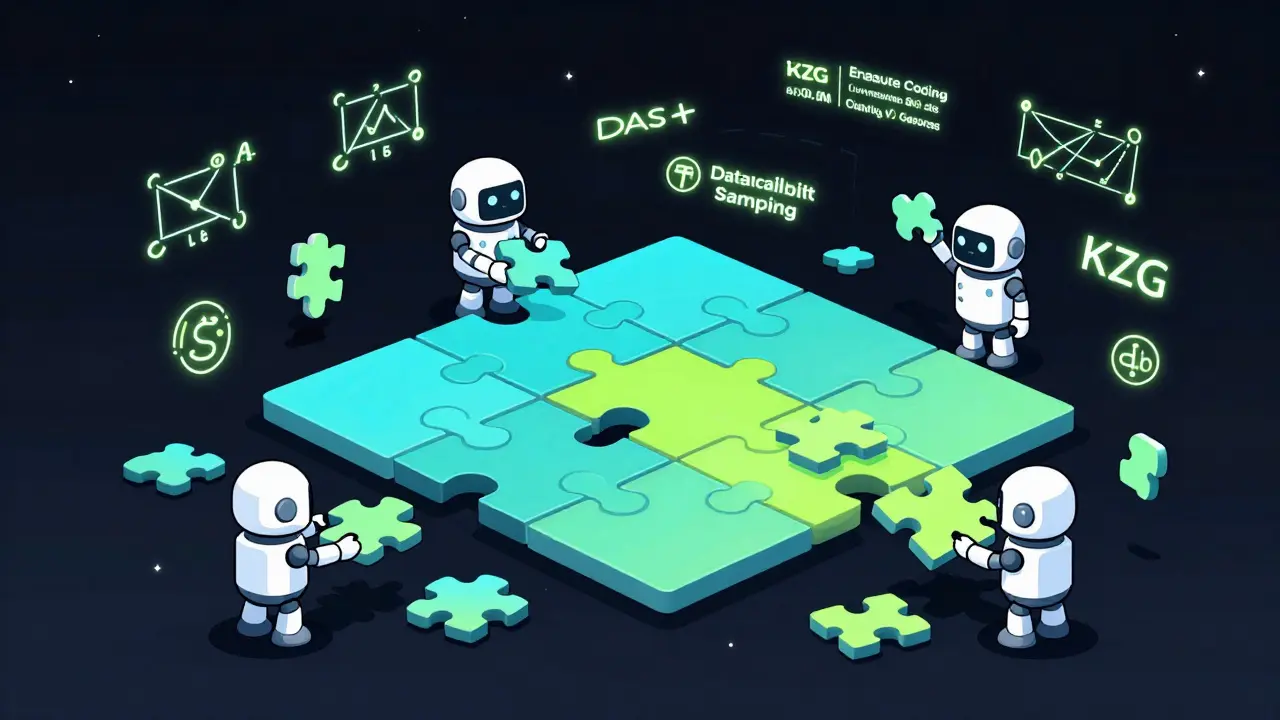

DALs typically combine three core techniques: erasure coding, polynomial commitments (most commonly KZG), and data availability sampling. Together, these ensure data is published securely and can be verified quickly.

- Erasure Coding: This technique adds redundancy to the original data so that it can be recovered from partial information. With a Reed-Solomon code that doubles the data, any half of the encoded fragments is enough to reconstruct the original. Celestia arranges each block's data in a square and applies Reed-Solomon coding to every row and column, doubling both dimensions. This ensures that even if some nodes go offline or act maliciously, the data remains recoverable.

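- KZG Polynomial Commitments: Named after Aniket Kate, Gregory Zaverucha, and Ian Goldberg, who introduced them in 2010, and adopted for Ethereum's danksharding design by researchers including Dankrad Feist and Vitalik Buterin, these commitments provide succinct proofs about data. The data is treated as a polynomial and committed to with a single short value; instead of checking the entire dataset, a user can verify a small proof that a particular piece of data matches what was committed. This drastically reduces verification overhead. Not every DAL uses them: Avail, EigenDA, and Ethereum's blobs rely on KZG, whereas Celestia commits to its data with Merkle roots and relies on fraud proofs to catch incorrectly encoded blocks.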

- Data Availability Sampling (DAS): Formalized by Mustafa Al-Bassam, Alberto Sonnino, and Vitalik Buterin in 2018, DAS lets light clients verify data availability by randomly sampling a small subset of the erasure-coded fragments. Depending on the encoding, 30-40 samples are enough for a client to reach over 99.9% confidence that the data is available, without downloading the whole block. This is the key innovation that makes large-scale decentralized verification possible.
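The erasure-coding idea fits in a few lines. This is a toy Reed-Solomon code, assuming a prime-field modulus and plain Lagrange interpolation chosen for readability (production codecs, including Celestia's, use different fields and much faster algorithms): k data symbols define a polynomial, the code publishes its evaluations at 2k points, and any k of those points pin the polynomial down again. `extend_square` is a hypothetical helper that applies the same extension to rows and then columns, mirroring the shape of Celestia's two-dimensional scheme.

```python
P = 2**31 - 1  # prime modulus for the toy field GF(P); real codecs use other fields


def lagrange_eval(points: list[tuple[int, int]], x: int) -> int:
    """Evaluate, at x, the unique polynomial of degree < len(points) through `points` (mod P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # Division in GF(P) is multiplication by the inverse (Fermat's little theorem).
        total = (total + yi * num * pow(den, P - 2, P)) % P
    return total


def extend(data: list[int]) -> list[int]:
    """Read k symbols as evaluations at x = 0..k-1 and return evaluations at x = 0..2k-1.

    The first k outputs equal the input (a systematic code); the rest are parity.
    """
    points = list(enumerate(data))
    return [lagrange_eval(points, x) for x in range(2 * len(data))]


def recover(shares: list[tuple[int, int]], k: int) -> list[int]:
    """Rebuild the k original symbols from any k surviving (index, value) shares."""
    if len(shares) < k:
        raise ValueError("not enough shares to reconstruct the data")
    return [lagrange_eval(shares[:k], x) for x in range(k)]


def extend_square(square: list[list[int]]) -> list[list[int]]:
    """Hypothetical 2D extension: extend every row, then every column (k x k -> 2k x 2k)."""
    rows = [extend(row) for row in square]
    cols = [extend(list(col)) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


# Usage: lose any half of the encoded symbols and still recover the data.
data = [104, 101, 108, 108]
encoded = extend(data)
survivors = [(i, encoded[i]) for i in (1, 4, 6, 7)]
assert recover(survivors, len(data)) == data
```

Because the code is systematic, honest nodes can serve the original data directly and only fall back on interpolation when fragments go missing.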
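The check at the heart of a KZG opening can be illustrated with ordinary modular arithmetic. This toy sketch has none of KZG's security: it assumes a small prime modulus and a visible "setup point" `tau`, whereas real KZG hides `tau` inside elliptic-curve points from a trusted setup and performs the final comparison with a pairing. What it does show is the identity being checked: a claimed value y = p(z) is correct exactly when p(X) - y is divisible by (X - z), and the proof is the quotient polynomial evaluated at `tau`. All function names are hypothetical.

```python
P = 2**31 - 1  # toy prime modulus; real KZG works in the BLS12-381 scalar field


def poly_eval(coeffs: list[int], x: int) -> int:
    """Horner's rule; coeffs[i] is the coefficient of X**i."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % P
    return acc


def quotient(coeffs: list[int], z: int) -> list[int]:
    """Synthetic division: q such that p(X) - p(z) == (X - z) * q(X)."""
    n = len(coeffs) - 1
    q = [0] * n
    carry = 0
    for i in range(n, 0, -1):
        carry = (carry * z + coeffs[i]) % P
        q[i - 1] = carry
    return q


def commit(coeffs: list[int], tau: int) -> int:
    # Stand-in for the group element [p(tau)]_1; here tau is NOT hidden.
    return poly_eval(coeffs, tau)


def open_at(coeffs: list[int], z: int, tau: int) -> tuple[int, int]:
    """Return the claimed value y = p(z) and a proof standing in for [q(tau)]_1."""
    return poly_eval(coeffs, z), poly_eval(quotient(coeffs, z), tau)


def verify(commitment: int, z: int, y: int, proof: int, tau: int) -> bool:
    # Toy analogue of the pairing check e(C - [y], [1]) == e(proof, [tau - z]).
    return (commitment - y) % P == proof * (tau - z) % P


# Usage: open p(X) = 5 + 3X^2 + X^3 at z = 2.
p = [5, 0, 3, 1]
tau = 123_456_789  # "secret" setup point; a real trusted setup would keep it hidden
C = commit(p, tau)
y, proof = open_at(p, 2, tau)
assert y == 25 and verify(C, 2, y, proof, tau)
assert not verify(C, 2, y + 1, proof, tau)
```

Since anyone who knows `tau` could forge a quotient for a false value, this toy is only about the algebra; the binding property comes entirely from keeping `tau` hidden in the real scheme.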

These mechanisms transform how we think about node participation. You no longer need terabytes of storage to participate meaningfully in network security. Light nodes can run on standard hardware, broadening the set of participants who independently verify the chain and enhancing decentralization.

On-Chain vs. Off-Chain DAL Approaches

Not all data availability solutions are built the same. The market is currently divided between on-chain approaches, where data is stored on the main blockchain, and off-chain dedicated layers. Each has distinct trade-offs regarding cost, throughput, and security assumptions.

| Feature | On-Chain (e.g., Ethereum Pre-Danksharding) | Off-Chain Dedicated (e.g., Celestia, EigenDA) |
|---|---|---|
| Throughput | Low (15-45 TPS) | High (100,000+ TPS potential) |
| Cost per Transaction | High ($1.23 avg. in late 2023) | Low ($0.0001 estimated) |
| Node Storage Requirement | High (>1 TB) | Low (1-2 GB for light nodes) |
| Security Model | Economic Security (Slashing) | Cryptographic Security + Economic Incentives |
| Ecosystem Maturity | Very High | Growing (Celestia ~15 active rollups in 2023) |

Ethereum’s transition with proto-danksharding (EIP-4844) represents a hybrid step. It introduces "blobs" of data that are cheaper to post than calldata, reducing rollup costs by approximately 90%. However, it still relies on Ethereum’s base layer for availability. Dedicated off-chain solutions like EigenDA leverage restaking via EigenLayer to provide higher throughput at lower costs, though they introduce new trust assumptions regarding the committee operators.
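To put "blobs" in concrete terms, here is a small calculation assuming the parameters EIP-4844 shipped with in Ethereum's Dencun upgrade: 4,096 field elements of 32 bytes per blob, a target of 3 and a maximum of 6 blobs per 12-second block. Later upgrades have raised the blob counts, so treat these as launch values.

```python
# Blob parameters from EIP-4844 as activated in the Dencun upgrade
# (later upgrades raised the target and maximum blob counts).
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
TARGET_BLOBS_PER_BLOCK = 3
MAX_BLOBS_PER_BLOCK = 6
SECONDS_PER_SLOT = 12

BLOB_SIZE = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT  # 131,072 bytes (128 KiB)


def blob_bandwidth(blobs_per_block: int) -> tuple[int, float]:
    """Return (bytes per block, bytes per second) for a given blob count."""
    per_block = blobs_per_block * BLOB_SIZE
    return per_block, per_block / SECONDS_PER_SLOT


for label, n in (("target", TARGET_BLOBS_PER_BLOCK), ("max", MAX_BLOBS_PER_BLOCK)):
    per_block, per_second = blob_bandwidth(n)
    print(f"{label}: {per_block / 1024:.0f} KiB per block, {per_second / 1024:.0f} KiB/s")
```

This prints 384 KiB per block (32 KiB/s) at the target and 768 KiB per block (64 KiB/s) at the maximum. Rollups typically pack slightly less than 32 bytes of payload into each field element, and consensus clients only have to serve blobs for 4,096 epochs (about 18 days); that limited retention, together with a separate blob fee market, is a large part of why blob space is priced below permanent calldata.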

Key Players in the DAL Ecosystem

Several projects are leading the charge in building robust data availability infrastructure. Understanding their differences helps developers choose the right stack for their applications.

Celestia is often cited as the pioneer of dedicated DALs. Its architecture focuses purely on data availability, avoiding execution logic entirely. This specialization allows it to achieve high throughput with minimal resource usage. As of Q3 2023, shortly before its October 2023 mainnet launch, Celestia processed approximately 1.25 MB per block with finality times of 10-15 seconds. Its Cosmos SDK-based design offers flexibility, but developers interacting with the DA layer itself must work with Cosmos tooling rather than familiar EVM environments.
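To see what a Celestia light node actually samples from, the sketch below estimates the size of the two-dimensional data square for a given amount of block data. It assumes 512-byte shares and power-of-two square widths, and it ignores the namespace and metadata bytes that real shares carry, so it slightly undercounts; `square_dimensions` is a hypothetical helper, not part of any Celestia API.

```python
import math

SHARE_SIZE = 512  # bytes per share (assumption; real shares also carry namespace/metadata)


def square_dimensions(data_bytes: int) -> dict[str, int]:
    """Approximate original and extended square sizes for a block of `data_bytes`."""
    shares = math.ceil(data_bytes / SHARE_SIZE)
    # Smallest power-of-two width k with k * k >= shares (assumed layout rule).
    k = 1
    while k * k < shares:
        k *= 2
    extended_width = 2 * k  # 2D Reed-Solomon doubles each dimension
    return {
        "shares": shares,
        "original_width": k,
        "extended_width": extended_width,
        "extended_bytes": extended_width * extended_width * SHARE_SIZE,
    }


print(square_dimensions(1_250_000))
```

For roughly 1.25 MB of block data this gives a 64 × 64 original square extended to 128 × 128, about 8 MiB of erasure-coded data from which light nodes draw their random samples. The fourfold expansion is the price of letting any half of each row or column rebuild the rest.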

Avail takes a multi-layered approach, combining data availability with cross-chain interoperability (Nexus layer) and multi-token security (Fusion layer). This makes it attractive for enterprises looking for broader connectivity options. Polygon’s announcement of an Avail-based solution for enterprise clients highlights its growing appeal in institutional settings.

EigenDA stands out for its integration with Ethereum's staking ecosystem. By using restaked ETH as collateral, it aims to provide economic security comparable to Ethereum itself. Testnet benchmarks reported throughput of around 100,000 transactions per second, although such figures depend heavily on the assumed transaction size. However, its reliance on a committee of operators means users must trust that enough honest operators are online to prevent data withholding attacks.
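The committee trust assumption can be modeled in a few lines. This is a simplified sketch, not EigenDA's actual protocol or API: it assumes data is erasure-coded into chunks, that chunks are handed out roughly in proportion to stake, and that the data can be rebuilt whenever the honest, online operators jointly hold enough distinct chunks. `Operator`, `assign_chunks`, and `is_retrievable` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Operator:
    name: str
    chunk_ids: set[int]      # erasure-coded chunks this operator stores
    online_and_honest: bool  # False models an offline or withholding operator


def assign_chunks(stakes: dict[str, int], total_chunks: int) -> dict[str, set[int]]:
    """Hypothetical assignment: chunks handed out roughly in proportion to stake."""
    total_stake = sum(stakes.values())
    assignment: dict[str, set[int]] = {}
    next_chunk = 0
    for name, stake in stakes.items():
        count = round(total_chunks * stake / total_stake)
        assignment[name] = set(range(next_chunk, min(next_chunk + count, total_chunks)))
        next_chunk += count
    return assignment


def is_retrievable(operators: list[Operator], chunks_needed: int) -> bool:
    """Data can be rebuilt if honest, online operators jointly hold enough distinct chunks."""
    available: set[int] = set()
    for op in operators:
        if op.online_and_honest:
            available |= op.chunk_ids
    return len(available) >= chunks_needed


# Usage: 4 data chunks extended to 8 (any 4 suffice), spread over three operators.
stakes = {"op-a": 50, "op-b": 30, "op-c": 20}
chunks = assign_chunks(stakes, total_chunks=8)
operators = [Operator(n, chunks[n], online_and_honest=(n != "op-a")) for n in stakes]
print(is_retrievable(operators, chunks_needed=4))  # True: op-b and op-c hold 4 chunks
```

If op-b also went offline, op-c's two chunks would fall short and the data would be lost, which is the data withholding scenario the paragraph above describes.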

Implementation Challenges for Developers

Building on a modular architecture introduces new complexities. While the benefits of scalability are clear, the learning curve is steep. By some estimates, developers spend 3-4 weeks mastering data availability concepts before feeling comfortable integrating them into production systems.

One major hurdle is tooling maturity. According to a Blockworks survey of 150 developers, 52% cited lack of mature tooling as a significant barrier. Celestia’s GitHub repository shows numerous open issues related to DAS implementation complexity. Similarly, Ethereum’s danksharding efforts face challenges with KZG commitment integration. For teams used to Solidity and EVM tools, moving to Cosmos SDK or custom RPC endpoints requires significant retraining.

Another challenge is interoperability. Moving data between different DALs, or from a DAL to an execution layer, isn't seamless. The Interchain Foundation's $5 million research initiative aims to address these gaps, but standardized protocols are still evolving. Developers must also think carefully about dispute resolution and exit paths, especially when using Data Availability Committees (DACs). In StarkEx's Validium deployments, for example, STARK validity proofs guarantee that state transitions are correct, but users still depend on the committee to release the data they need to reconstruct state and withdraw funds.

Market Trends and Future Outlook

The momentum behind modular blockchains is undeniable. Messari's September 2023 report projects the DAL market will grow from $1.2 billion in 2023 to $8.7 billion by 2027, which works out to a compound annual growth rate of roughly 64% over those four years. Investment flows reflect this optimism, with Coinbase investing $50 million in Celestia and Binance Labs launching a $100 million fund for modular infrastructure.

Regulatory frameworks are also catching up. The European Union's MiCA regulation, fully applicable from December 2024, imposes record-keeping requirements on crypto-asset service providers, which could accelerate adoption of infrastructure offering verifiable, transparent audit trails. Gartner predicts that by 2026, 70% of new blockchain applications will utilize modular architectures with dedicated DALs, up from just 15% in 2023.

As the ecosystem matures, we expect greater specialization. Projects like Celestia plan upgrades such as "Arbital" to add validity proofs, further blurring the lines between availability and execution. Meanwhile, Ethereum's continued work toward full danksharding promises to make its native DAL more competitive. The future belongs to those who can balance scalability, security, and usability effectively.

What is a Data Availability Layer?

A Data Availability Layer (DAL) is a specialized subsystem in modular blockchain architecture that stores transaction data and provides cryptographic guarantees that this data is publicly accessible. It separates data storage from execution, allowing networks to scale without forcing every node to process all transactions.

Why do we need Data Availability Layers?

We need DALs to solve the scalability trilemma. Monolithic blockchains struggle to increase throughput because larger blocks require more storage and bandwidth for full nodes. DALs enable trustless scaling by allowing light clients to verify data availability through sampling, thus supporting high-throughput execution layers like rollups.

How does Data Availability Sampling work?

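Data Availability Sampling (DAS) allows a verifier to confirm that data is available by randomly checking a small number of data fragments. Erasure coding first expands the original data so that any sufficiently large subset of fragments (for a 2x Reed-Solomon extension, any half of them) can reconstruct the whole. A block producer therefore has to withhold a large portion of the fragments to make the data unrecoverable, and random samples are then likely to hit a missing one. With 30-40 samples, a client can achieve 99.9% confidence that the data is present without downloading the entire block.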
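The confidence figure follows from a short probability argument. The sketch below assumes each sample is an independent, uniformly random draw and that the adversary withholds just enough shares to block reconstruction: more than half for a one-dimensional 2x extension, and at least (k+1)² of the (2k)² shares, roughly a quarter, for a two-dimensional scheme like Celestia's. The function names are hypothetical.

```python
import math


def das_confidence(samples: int, withheld_fraction: float) -> float:
    """Chance that at least one of `samples` independent, uniformly random
    queries lands on a withheld share."""
    return 1 - (1 - withheld_fraction) ** samples


def samples_needed(target: float, withheld_fraction: float) -> int:
    """Smallest number of samples whose detection probability reaches `target`."""
    return math.ceil(math.log(1 - target) / math.log(1 - withheld_fraction))


# 1D 2x extension: an attacker must hide more than half of the shares.
print(samples_needed(0.999, 0.5))    # 10
# 2D extension: hiding roughly a quarter of the shares can suffice.
print(samples_needed(0.999, 0.25))   # 25
```

Under the 25% assumption, about 25 samples clear 99.9% and 30 samples give roughly 99.98%, consistent with the 30-40 sample figure above. Because many light nodes sample independently, the network as a whole also collects enough shares to help reconstruct a block if the producer disappears.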

What is the difference between Celestia and Ethereum's DAL?

Celestia is a dedicated, off-chain DAL focused solely on data availability, offering high throughput and low costs with lightweight nodes. Ethereum's approach is integrated: rollups post their data to Ethereum's main chain, which limits throughput. Proto-danksharding (EIP-4844), activated in the March 2024 Dencun upgrade, introduced cheaper data blobs, moving Ethereum toward a more modular model while keeping its economic security.

Are Data Availability Committees safe?

Data Availability Committees (DACs) offer high performance but introduce centralization risks, because they rely on a smaller set of operators to store data. Some are economically secured through slashing (as EigenDA aims to be via restaking), while others, such as StarkEx's committees, rely on the reputation of known members. Either way, users must trust that enough honest operators remain online. Dedicated decentralized DALs like Celestia offer stronger censorship resistance but may have higher latency.
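The trust assumption behind a DAC comes down to two inequalities. This sketch models a hypothetical k-of-n committee, not StarkEx's or EigenDA's actual implementation; signatures are represented as a set of member names rather than real cryptographic signatures, and honest members are assumed to sign only after storing the data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DACConfig:
    members: int    # committee size n
    threshold: int  # signatures required for a valid availability certificate


def certificate_valid(config: DACConfig, signers: set[str]) -> bool:
    """A certificate is accepted once at least `threshold` distinct members sign."""
    return len(signers) >= config.threshold


def guarantees_honest_holder(config: DACConfig, max_dishonest: int) -> bool:
    """Safety: every valid certificate includes at least one honest signer
    who holds the data only if the threshold exceeds the dishonest count."""
    return config.threshold > max_dishonest


def can_certify(config: DACConfig, max_offline: int) -> bool:
    """Liveness: new batches can be certified only if enough members stay online."""
    return config.members - max_offline >= config.threshold


# Usage: a 5-of-7 committee.
cfg = DACConfig(members=7, threshold=5)
print(guarantees_honest_holder(cfg, max_dishonest=4))  # True
print(can_certify(cfg, max_offline=3))                 # False
```

The design tension is visible in the two checks: raising the threshold tolerates more dishonest members, but it also makes the committee easier to halt when members go offline.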

Which DAL should I choose for my project?

Your choice depends on your needs. If you prioritize EVM compatibility and ecosystem maturity, Ethereum (especially post-EIP-4844) is ideal. For maximum throughput and lower costs with a willingness to learn new stacks, Celestia is a strong candidate. For enterprise-grade interoperability, consider Avail. Always evaluate tooling support and community resources before committing.
